An interview with the cofounder of PhotoRoom

Matthieu Rouif talks about the practical applications of AI magic in commercial and resale photography—and how to maintain focus in a surging field.

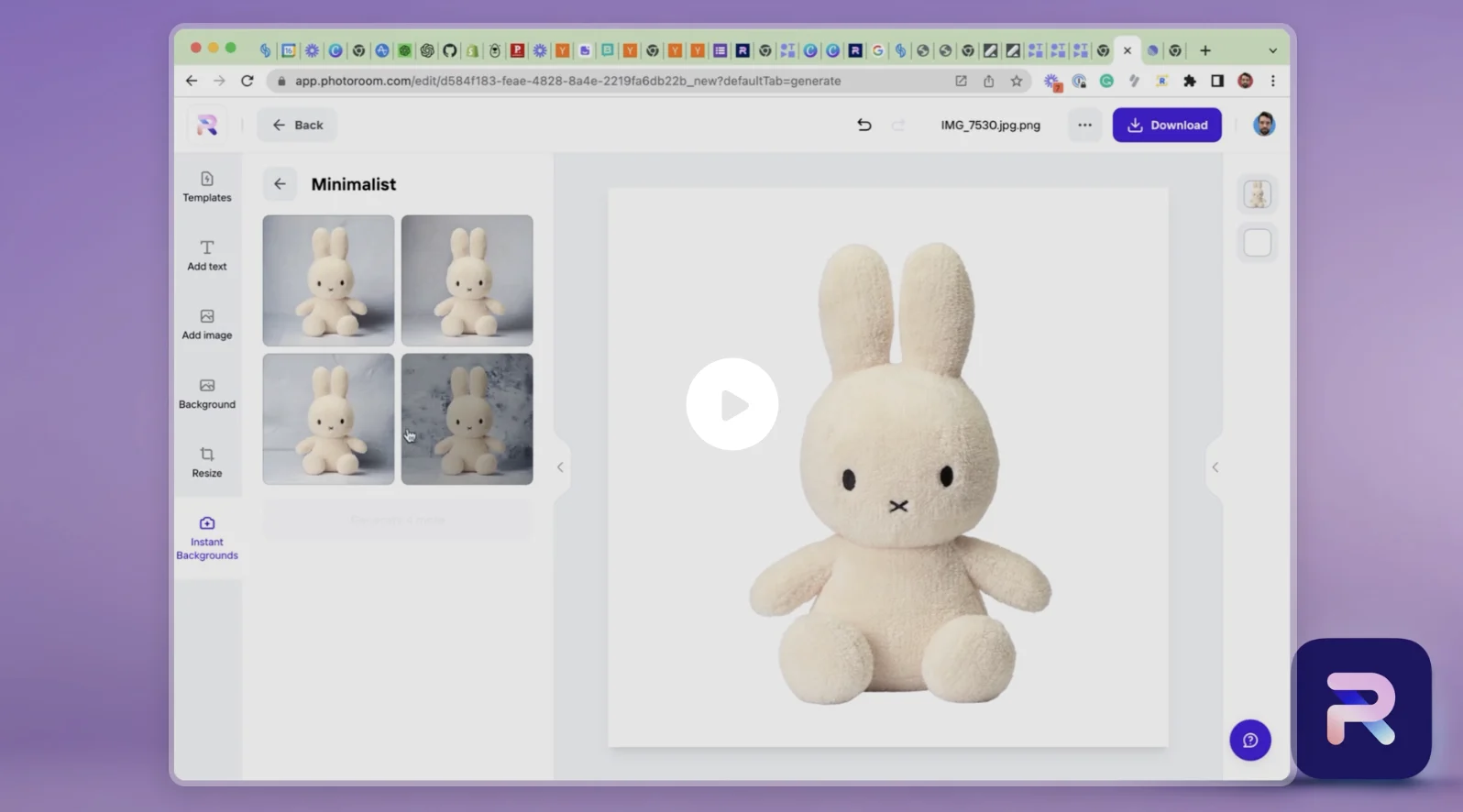

Even before image generators like Midjourney and DALL·E began making headlines, the Paris-based startup PhotoRoom had already become an AI success story. The company was launched in 2020 by former GoPro product manager Matthieu Rouif and machine learning engineer Eliot Andres, and its initial offering, a background removal app, immediately became a hit with online sellers who needed an efficient way to edit product images.

The company’s app is now available in 28 languages, has been downloaded more than 40 million times, and has been extended to web and API versions. It has also gained several other tools, including an AI-driven “instant background” feature that creates custom backdrop images from text and visual prompts. Individual sellers and small businesses using PhotoRoom can generate high-quality product images that until recently would have required thousands of dollars in photography and editing costs. (A PhotoRoom Pro subscription costs $9.99 per month per user.)

PhotoRoom has been a Stripe user since 2021 and announced a new $19 million investment round in November 2022. Stripe spoke to Rouif about how a trip to McDonald’s changed the course of his business, why he believes image customization will only become more important as global commerce becomes more personalized, and how he maintains focus amid the intense acceleration of the AI industry.

Eliot Andres and Matthieu Rouif, cofounders of PhotoRoom

Why would AI photo editing be important enough to individual sellers to require a specialized app or subscription service?

There are hundreds of millions of people in the world today who sell products or have their own business, and what their customers see when they are shopping is an image, usually on mobile. About 72% of ecommerce is mobile. Even businesses that sell from a physical store need images on Google Maps or Instagram to attract customers.

What PhotoRoom does is turn those images into ones that are not only beautiful, but inspire trust. What we ask is, how do you build the best visual and the best imagery to convey what the product is and to inspire trust for your customers?

Speaking of trust and authenticity, a frequent criticism of AI images is that they look too polished or shiny. Is that a concern for you?

When we demoed PhotoRoom to partners a few years ago, some of the feedback was that we do “useful AI.” Midjourney and DALL·E are very aesthetic, but, as you say, they can look too good to be true. So our generative AI is focused on what’s around the product: maybe just a white background with a simple shadow and a reflection on a surface. But we don’t touch a single pixel of the product. We take a product photo, remove the background, and regenerate the other pixels, but not the product. It’s very important for resellers that you keep all the quality, and all the defects, of the product. Then we regenerate the rest to make it realistic. We’re doing minimalist gen AI, I guess.
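The principle Rouif describes, keeping every product pixel exactly as photographed and replacing only the surroundings, can be illustrated as mask-based compositing. The sketch below is purely illustrative and is not PhotoRoom’s pipeline, which the interview does not describe. It assumes a binary product mask (True where the product is) and an already-generated background are available; the function name `composite` and the list-of-RGB-tuples image format are hypothetical choices made to keep the example dependency-free.

```python
from typing import List, Tuple

# Hypothetical minimal image representation: rows of (R, G, B) tuples.
Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]
Mask = List[List[bool]]


def composite(product_photo: Image, generated_background: Image, mask: Mask) -> Image:
    """Keep every pixel where mask is True (the product) exactly as photographed,
    and take every other pixel from the generated background."""
    if not (len(product_photo) == len(generated_background) == len(mask)):
        raise ValueError("images and mask must have the same height")
    result: Image = []
    for photo_row, bg_row, mask_row in zip(product_photo, generated_background, mask):
        if not (len(photo_row) == len(bg_row) == len(mask_row)):
            raise ValueError("images and mask must have the same width")
        # Product pixels are copied unchanged; only background pixels are replaced.
        result.append([p if keep else b for p, b, keep in zip(photo_row, bg_row, mask_row)])
    return result


if __name__ == "__main__":
    photo = [[(10, 10, 10), (20, 20, 20)], [(30, 30, 30), (40, 40, 40)]]
    white = [[(255, 255, 255)] * 2 for _ in range(2)]
    product_mask = [[True, False], [False, True]]
    print(composite(photo, white, product_mask))
```

A hard boolean mask keeps the sketch minimal; a real system would more likely use a soft alpha matte so that edges, shadows, and reflections blend smoothly with the new background.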

LLMs are getting a lot of attention right now. Do those advances figure into what you do?

What I’m really excited about, and what I think is going to advance in the coming months, is what the industry calls multimodal input. For us, the idea is that you would input a product photo along with some text describing what you would like to see, and get a result that merges the two inputs. Up to now, the models have all been single-modal: image input, or text input, or text-to-image. In user interviews, we’ve realized that text-only prompts take too long to write on a smartphone, and there’s a kind of fear of the blank page: when you have to start from nothing, people don’t know what to write.

You tweeted that it’s important to PhotoRoom to own its ML stack. Can you explain that?

The case for PhotoRoom is that we build on top of foundation generative models like Stable Diffusion but add value using feedback from our users. What’s important to them? Is it high quality or speed? What kind of hardware acceleration do you want to use? Having a very strong machine learning team allows you to make product choices that optimize the result for your end users.

So what do your particular users want that might be different from the average person?

We know our users want quality. In ecommerce the goal is really to get it 100% right. If you’re processing 10,000 images as an ecommerce owner, a one or two percent error rate means 100 to 200 photos that you would have to edit manually, even if each fix is easy on its own. So it’s worth taking one or two extra seconds of processing time, and using very large transformer models, to get a perfect result. If you just take your stack off the shelf, you can’t do this.

How did you start working with Stripe?

We started with mobile apps, with billing through the App Store and Play Store. But we also wanted to be ubiquitous, serving people on both mobile and the web, and Stripe was the best solution for us. It inspires trust, and it’s very easy to set up as a developer. Stripe Tax was also a big factor in choosing Stripe, because we sell globally and need to understand our tax obligations in each country. On top of that, what we really valued was owning the relationship with the customer.

Do you see yourself doing business with enterprise companies in the future?

One of the reasons we chose Stripe, actually, is that we wanted to offer an API. We launched a background removal API last November, and it now includes generative AI like what we have in our app, so we’re starting to talk to big ecommerce sites and marketplaces that want to automate the process.

I think PhotoRoom is quite unique in that we have this big audience of producers, and we can use their feedback to improve the quality of our algorithm, and then go to bigger companies with the level of quality they require. Tens of millions of users on the mobile app is an amazing playground for us to test new tech, get feedback, and then improve the quality for bigger ecommerce marketplaces.

A lot of AI startups believe that their products can transform the most basic parts of our lives. Does PhotoRoom have that kind of ambition?

We would like to help with anything visual you need for commerce, and I think ecommerce is moving in a direction where, as a merchant, you can create different visuals for different personas. Let’s say you’re selling furniture: you can show a modern design setup, or create a cozy living room, to showcase the same product. And you could show these different visuals to different users, even depending on the time of day. So we’d like to go into areas like A/B testing for images and visuals. We want to tell you which image will sell best to each of your customers, then help you create it.

You are right in the middle of what is probably one of the most exciting and fast-moving technology revolutions that has ever taken place. What does it feel like, as an entrepreneur, to build something that scales at hyperspeed, given the way global business is embracing AI?

I feel like a five-year-old at Christmas. I’m not sure which present to open first. In these situations, it’s easy to get distracted and start building different things every week. Fortunately, my cofounder Eliot is excellent at keeping us focused. We focus on commerce photography and keep a list of things we won’t build each quarter. We may miss some opportunities, like AI avatars, but they wouldn’t serve our mission, and this discipline has saved us from getting caught up in all the generative AI craziness.

I’m old enough in tech to have gotten started during the last revolution, the mobile one. I attended the first iOS class at Stanford and, in 2009, helped create the first app for sending postcards from a smartphone. People want to send each other pictures from their holidays, but sending postcards from a smartphone was a miss. Instagram was the hit. My biggest takeaway from that experience was the importance of not building old things with new tech, but building new things with new tech.