Sorbet: Stripe’s type checker for Ruby

By 2017, Stripe had grown to the point where hundreds of engineers had written millions of lines of code. Most of that code was—and still is—written in Ruby, which is famous for helping engineers iterate quickly (if somewhat notorious for encouraging inscrutable code). Unfortunately we were starting to see Ruby come apart at the seams: new engineers found it hard to learn the codebase, and existing engineers were scared to make sweeping changes. Everyone faced a constant tradeoff: run the fast, local tests which might not catch many breakages, or run all the tests, even the slow ones. Ruby was becoming a source of friction more than a source of productivity.

We set out to change that, with two goals in mind: make it easier to understand the code, while doubling down on what makes Ruby productive and delightful. This was the backdrop against which we decided to create and open source Sorbet, a fast, powerful type checker designed for Ruby. Sorbet statically analyzes a codebase, builds up an understanding of how each piece of code relates to every other piece, and then exposes that knowledge to the programmer via type errors, autocompletion results, documentation on hover, or jumps between definitions and usages.

Today Sorbet runs over Stripe’s entire Ruby codebase, currently amounting to over 15 million lines of code spread across 150,000 files. We can't take credit for pioneering the idea of adding static types to a dynamically typed language—Microsoft and Facebook popularized the approach with TypeScript and Hack, respectively. However, we thought it was worth sharing how Sorbet has not just met but exceeded our goals in the almost four years since we first enabled it on our Ruby codebase.

Sorbet reinforces the delightful bits of Ruby while making engineers more productive. Not only has it made code easier to understand, it’s even helped shape and reinforce Stripe's engineering culture as we've grown. But before we dive into what makes Sorbet… Sorbet, let’s take a short step back in time to its origins at Stripe.

A brief history of Sorbet inside Stripe

Type annotations arrived in Stripe's Ruby codebase as early as November 2016, almost a full year before work began on Sorbet. These annotations were born out of a desire to encourage engineers to write modular units with clear public interfaces. Here's an example test case from the pull request that introduced type annotations:

Neither Sorbet nor any other static type checker existed to consume these type annotations yet; they existed only at runtime. The declare_method call above acted like a decorator on the def call method: it would check that the msg argument given to call was a String and that call returned a String on every invocation. Throughout the next year, these runtime-only annotations spread throughout Stripe's codebase.
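To make the mechanism concrete, here is a minimal sketch of how a runtime-only check like this could be built in plain Ruby. It is not Stripe's implementation: the `RuntimeSignatures` module, the `Greeter` class, and the exact shape of the `declare_method` arguments are assumptions made for illustration.

```ruby
# Hypothetical runtime-only signature checker; not Stripe's original code.
module RuntimeSignatures
  # Record a signature that applies to the next method defined in the class.
  def declare_method(params, returns:)
    @pending_signature = { params: params, returns: returns }
  end

  # Ruby calls this hook after every `def` in a class that extends this module.
  def method_added(name)
    super
    signature = @pending_signature
    return if signature.nil?

    # Clear first, so the define_method call below does not wrap the wrapper.
    @pending_signature = nil
    original = instance_method(name)

    define_method(name) do |*args|
      # Check each positional argument against its declared type.
      signature[:params].each_with_index do |(param, type), index|
        unless args[index].is_a?(type)
          raise TypeError, "#{name}: expected #{param} to be a #{type}, got #{args[index].class}"
        end
      end

      # Call the original method, then check the return value.
      result = original.bind(self).call(*args)
      unless result.is_a?(signature[:returns])
        raise TypeError, "#{name}: expected return value to be a #{signature[:returns]}, got #{result.class}"
      end
      result
    end
  end
end

class Greeter
  extend RuntimeSignatures

  declare_method({ msg: String }, returns: String)
  def call(msg)
    "Hello, #{msg}"
  end
end

greeting = Greeter.new.call("world")
```

In this sketch, `Greeter.new.call(42)` raises a `TypeError`, but only when that line actually runs. Nothing inspects the code ahead of time, which is the gap a static checker fills.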

Months prior, we had added Flow, a static type checker for JavaScript, to our frontend codebase. Ruby developers quickly grew envious and kept asking us what it would take to get the same features for Ruby. We staffed an effort to figure out what it would take either to adopt one of the two in-progress Ruby type checkers (RDL and TypedRuby) or to build our own. RDL proved to be powerful, but too slow. TypedRuby was faster, but had bugs that would have required a near-rewrite to solve. So in November 2017, we began writing Sorbet from scratch. Six months later, in May 2018, Sorbet type checking became required in Stripe’s automated test suite. After another year of internal adoption, we released Sorbet to the world in June 2019.

In all that time a lot has changed—Sorbet has far more features today than we ever imagined back then. But there's been one constant driving force behind the project: building tools that make engineers working in Ruby more productive.

Supercharged productivity in Ruby

When we ask how Sorbet makes people more productive they tell us all sorts of things, but the most common theme is raw speed.

Sorbet gives near-instantaneous feedback while editing: for 80% of edits, it can finish reporting type errors in milliseconds, even in our multi-million line codebase. Even the longest waits for error reports are measured in seconds. Types aren't a replacement for tests, but few test suites are fast enough to run on every edit the way Sorbet does.

But there's more to it than just speed: Sorbet takes the toil out of understanding how code fits together.

On the day we rolled out the Sorbet-powered VS Code extension for Ruby, Justin Duke described the feeling better than anyone:

Having just spent the past few minutes clicking around VSCode like a kid on Christmas morning, I don't think it's an exaggeration to say that this might be the single largest improvement in my pay-server [Stripe's Ruby codebase] productivity since joining Stripe.

In a large codebase, Ruby can be uniquely hard to understand, even among other dynamically typed languages. What's worse is that it's hard to just, say, lint against the features that make Ruby hard to understand, because many of them are Ruby's most loved features. Here are some of the features that can make a Ruby codebase hard to unravel:

Ruby lacks import statements (like those in Python or JavaScript), which bind global names to file-scoped names. Instead, Ruby provides require statements, which merely run other Ruby code. This mechanism works kind of like #include statements in C and C++: a single require statement might hide implicit calls to hundreds of other require statements.

But this feature enables Rails’ famous “convention over configuration” approach to project layouts, which many people love about it: you don't have to import files in Rails; you can just reference the code you want to reference.

Ruby encourages factoring code into modules, which can then be mixed into classes or even other modules. When used well, modules can help organize code into composable, testable units.

But on the other hand, overuse of modules obscures where a method is defined behind a deep ancestor hierarchy. New Stripe engineers working in our codebase frequently struggled to find a method’s definition when it came into scope from behind multiple layers of modules.

Ruby embraces metaprogramming, which is when methods and objects are dynamically created by code itself, instead of directly by the programmer. Concretely, this means that while some methods are written literally like def invoices; ...; end, others are defined dynamically by calling a library function like has_many(:invoices). Metaprogramming as a way to share code is one of the biggest reasons why projects like Rails have been so successful.

Unfortunately, metaprogramming is very opaque. It prevents simple regular expression searches from surfacing method definitions. Once a definition is found, the programmer still has to trace through code to know things like what arguments the method takes.
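A small sketch shows how two of these features hide definitions. Every name below is hypothetical, and the `has_many` here is a toy stand-in, not Rails' implementation.

```ruby
# Hypothetical names throughout; this `has_many` is a toy, not the Rails macro.
module Auditable
  def audit_log
    "audit log for #{self.class.name}"
  end
end

module Billable
  include Auditable # mixins can themselves mix in other modules
end

module Associations
  # Class-level macro that defines an instance method at runtime.
  def has_many(name)
    define_method(name) { [] }
  end
end

class Customer
  include Billable
  extend Associations

  has_many :invoices # no literal `def invoices` appears anywhere
end

customer = Customer.new

# Works, yet searching the codebase for "def invoices" finds nothing.
invoices = customer.invoices

# The method is callable on Customer, but it lives two mixins away.
owner = Customer.instance_method(:audit_log).owner
```

Here `owner` is `Auditable`, a module that `Customer` never mentions by name. Answering "where is this defined?" by hand means walking `Customer.ancestors` or running the code, which is the kind of question an editor integration can answer directly.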

We built Sorbet to make it easy to navigate and understand a codebase without having to give up these features people love about Ruby. The key, more than just reporting type errors quickly, is to offer a powerful editor extension, which provides ever-present answers to common questions. The answer to “where is this class defined?” is a click away, not hidden behind multiple require statements. “How am I supposed to use this method?” fades as a flick of the cursor reveals the method's types and documentation, replacing a lengthy crawl through a class's transitive mixins. Instant responses from Sorbet mean less time toiling and more time discovering.

Sorbet delivers type errors and IDE responses this quickly because of a set of design choices we made early in its development. First, Sorbet is written in C++, not Ruby. To quote Nelson Elhage, one of the founding members of the team, "Writing in C++ doesn't automatically make your program fast, and a program does not need to be written in C++ to be fast. However, using C++ well gives an experienced team a fairly unique set of tools to write high-performance software." C++ gives us great baseline performance and a lot of headroom for further improvement when we decide that it's critical to make a given component of Sorbet fast.

Another key reason Sorbet is fast is that we deliberately chose a simple type inference algorithm. Specifically, Sorbet only does local type inference, so the result of type checking one method never affects the result of type checking another method. This inference algorithm is a pure function of the code inside a method and Sorbet's immutable indexes of what's defined where. Put this all together, and Sorbet's inference algorithm is embarrassingly parallel, scaling to as many cores as the machine has available while being able to use fast shared memory instead of copying large data structures.
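The independence property can be illustrated with a toy model. This is not how Sorbet is implemented (Sorbet is written in C++); the index, the method bodies, and `check_method` below are all invented for the sketch. The point is only that each check reads a frozen index and one method body, so no check ever waits on another.

```ruby
# Toy model, not Sorbet's implementation: all names here are hypothetical.
# The "index" maps method names to declared return types. It is frozen, so
# every worker can read it without locks or copies.
INDEX = { "parse_amount" => Integer, "format_amount" => String }.freeze

# Each method body is modelled as the calls it makes, paired with the type
# the caller expects back.
METHOD_BODIES = {
  "charge"  => [["parse_amount", Integer], ["format_amount", String]],
  "refund"  => [["parse_amount", String]], # wrong expectation: a type error
  "receipt" => [["format_amount", String]]
}.freeze

# Pure function: the result depends only on one method's body and the index.
def check_method(name, calls, index)
  calls.filter_map do |callee, expected|
    actual = index.fetch(callee)
    # Class#<= is true when `actual` is `expected` or one of its subclasses.
    "#{name}: #{callee} returns #{actual}, expected #{expected}" unless actual <= expected
  end
end

# Because the checks are independent, they can run concurrently in any order.
errors = METHOD_BODIES
  .map { |name, calls| Thread.new { check_method(name, calls, INDEX) } }
  .flat_map(&:value)
```

Threads in standard Ruby do not run CPU-bound work on multiple cores at once, so this sketch shows the shape of the design rather than its speedup: changing the body of `refund` can never change the result for `charge`.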

A bedrock for engineering values

In addition to the productivity boost, an unintended benefit of adopting Sorbet has been its cultural impact. In a fast-growing company, communicating and codifying cultural norms can be a full-time job on its own! Sorbet lends concrete structure to some of Stripe's engineering norms.

Consider the cultural norm “Stripe should grow more reliable over time.” Despite our best efforts, production incidents happen—our goal when an incident happens is to make sure the same one doesn't happen again. After years of using Sorbet, Stripe engineers reflexively reach for type annotations as a preventative tool when doing incident remediations.

As an aside, it's interesting to reflect on the classes of problems that simply don't happen at Stripe anymore (or if they do, they happen exceedingly rarely). For example: typos that used to manifest as NameError: uninitialized constant exceptions in production have been entirely replaced by static type errors. But even some more subtle problems are absent, like this one:

Does this method need to be passed a string invoice ID, or a full invoice object? Scanning the implementation for context clues can sometimes help, but type annotations replace guesswork with machine-checked assurances:
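As a hedged illustration of that question, consider the sketch below. `ReminderMailer`, `subject_for`, and the `Invoice` struct are hypothetical names. The `sig` syntax is Sorbet's, provided at runtime by the `sorbet-runtime` gem, and the `rescue` branch is only a stand-in so the snippet still runs where the gem is not installed.

```ruby
begin
  require "sorbet-runtime" # real gem; provides T::Sig and runtime sig checks
rescue LoadError
  # Stand-in so this sketch still runs without the gem: sigs become no-ops.
  module T
    module Sig
      def sig(&_blk); end
    end
  end
end

# Hypothetical domain class for illustration.
Invoice = Struct.new(:id, :amount_cents)

class ReminderMailer
  extend T::Sig

  # Without the sig, nothing says whether `invoice` is an ID string or an
  # Invoice object. With it, the expectation is stated and machine-checked.
  sig { params(invoice: Invoice).returns(String) }
  def subject_for(invoice)
    "Reminder: invoice #{invoice.id} is due"
  end
end

subject = ReminderMailer.new.subject_for(Invoice.new("in_123", 5_000))
```

With the signature in place, a call such as `subject_for("in_123")` is reported by Sorbet as a static type error, instead of failing in production when `id` is called on a String.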


This brings up another norm: “public interfaces should have up-to-date documentation.” For this, we take advantage of how Sorbet's strictness levels work. Sorbet activates in files with # typed: true comments at the top of the file, but only in a “best-effort” mode: type annotations aren't required and all methods behave as though their arguments were annotated with T.untyped. But by trading up to # typed: strict, Sorbet stops assuming T.untyped and instead requires signatures for all methods.

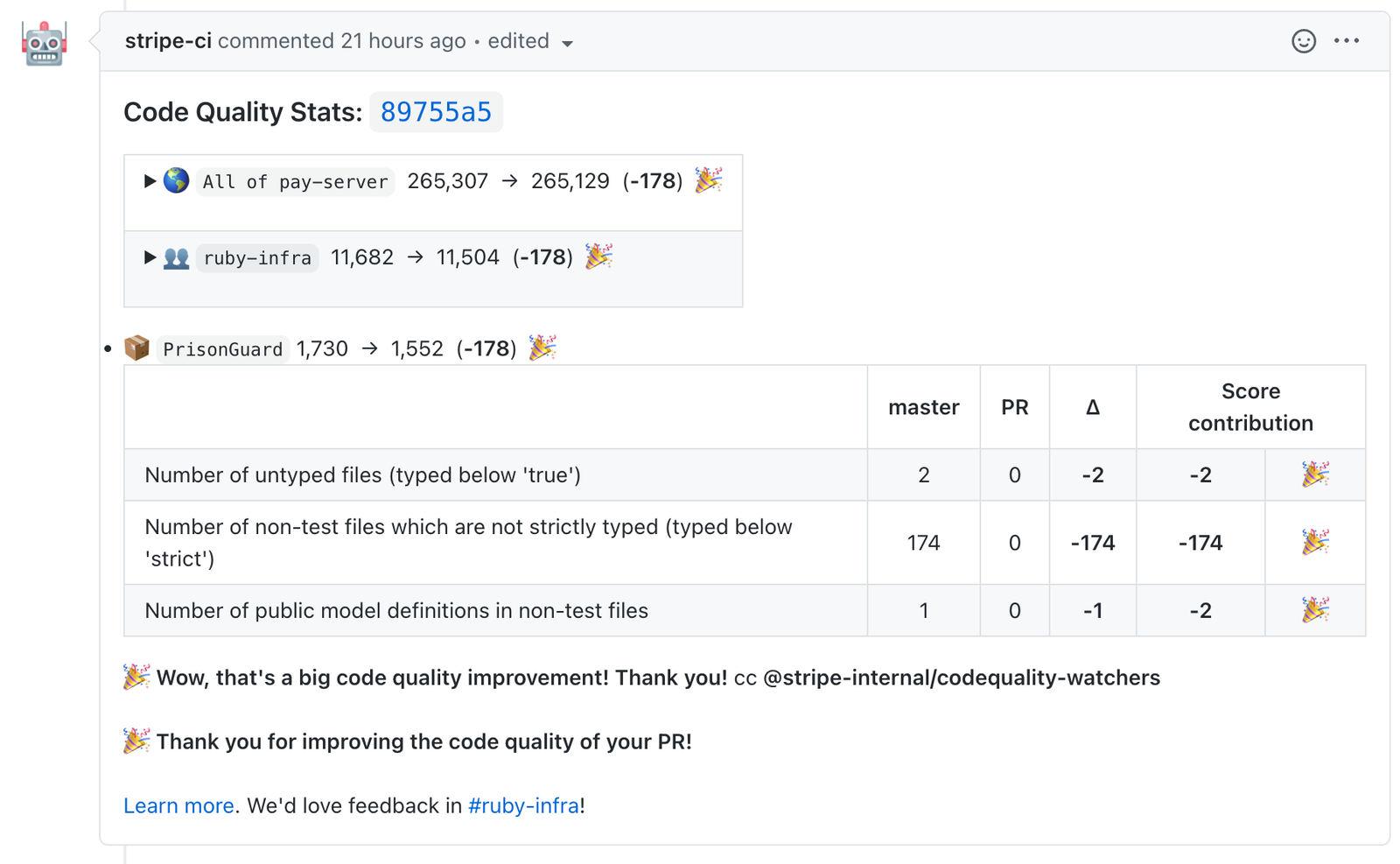

To encourage this, Stripe’s continuous integration (CI) system looks through all code changes and leaves a “Stripe code quality score” in a comment on the pull request, like this one:

The score is reported as a weighted sum of signals, where a smaller score is better. There are a lot of inputs to the score, and we hide the ones that don’t change in a given pull request, but the one relevant to Sorbet in the picture above reads, “Number of non-test files which are not strictly typed (typed below strict).” This means both the author and reviewer get a heads up when new files aren't using # typed: strict, reminding them that at Stripe we really prefer all Ruby code to be type-annotated. After almost 4 years of Sorbet at Stripe, 85% of all non-test files opt into # typed: strict (and for that matter, over 95% of all files are # typed: true).

We often say that most of Stripe's engineers haven't been hired yet. Tooling like Sorbet encodes lessons learned over the years and helps teach these lessons to new engineers in a hands-on environment. As we continue to grow, especially distributed around the globe, Sorbet will continue to serve as a concrete reference point for new and old coworkers to align on shared engineering values.

Ruby fits in alongside a handful of other languages in use at Stripe. Stripe is also deeply investing in building new product backends in Java, delightful frontend experiences with TypeScript, and various pieces of infrastructure in Go. Stripe commits to staffing high-quality development experiences across all of these languages, not just Ruby. Making strategic investments in tooling ensures engineers at Stripe write code that is safe and fast as we scale.

After all this time, Sorbet is still gaining features, performance improvements, and bug fixes. We love that Sorbet lets us enhance Ruby's natural productivity while helping shape Stripe's code to be resilient and understandable as we grow. As we approach 5 years since Sorbet's conception, we can't wait to see where the next 5 years will lead!

Sorbet is written in C++ and compiles to WebAssembly, which means you can try it out in your browser. Below you’ll find a link to the Sorbet Playground.

Get started with Sorbet

Our docs guide you through the process of adding Sorbet to your codebase.

Try Sorbet online

Play around with Sorbet’s type system and editor features, right in your browser.

Discover the Sorbet community

Come chat with other Sorbet users, working on large and small codebases alike.