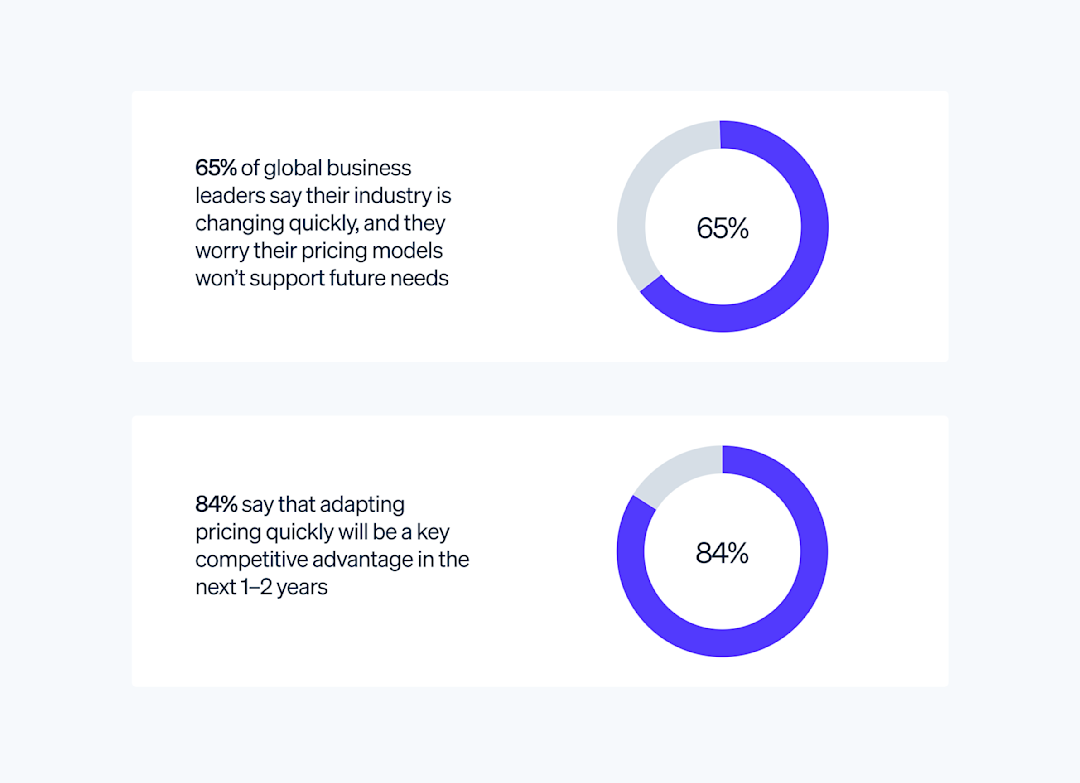

Analyzing how SaaS platforms are shipping payments and finance products in days

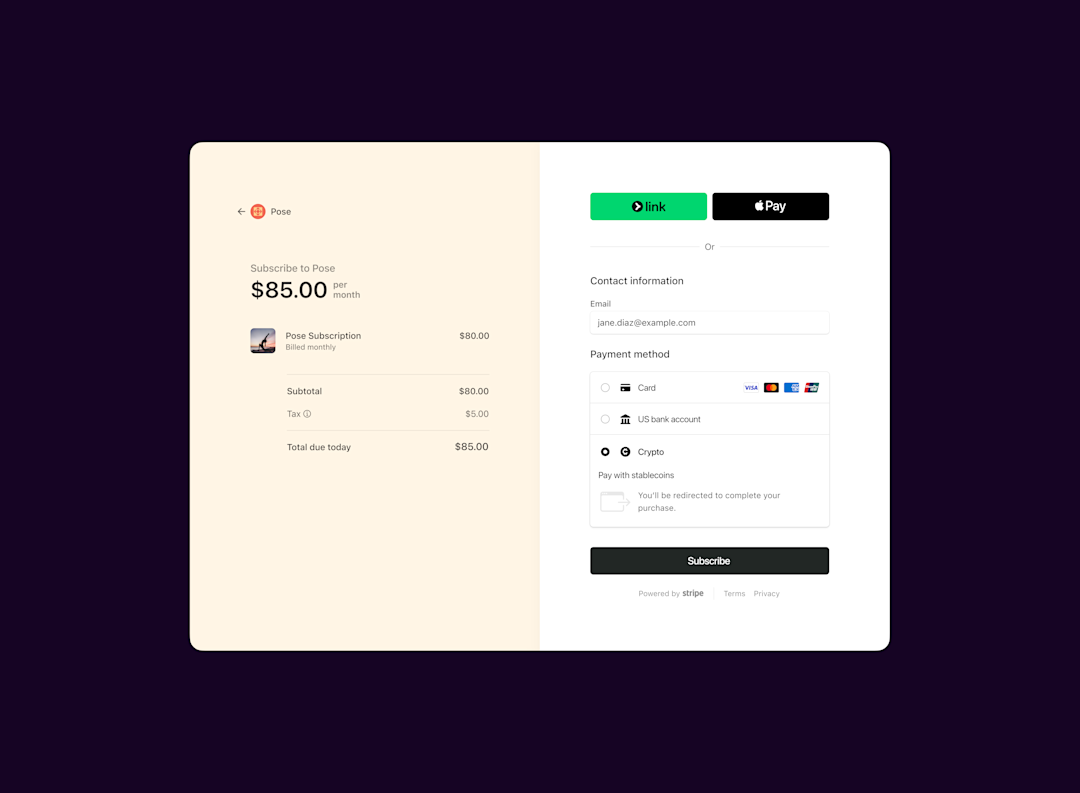

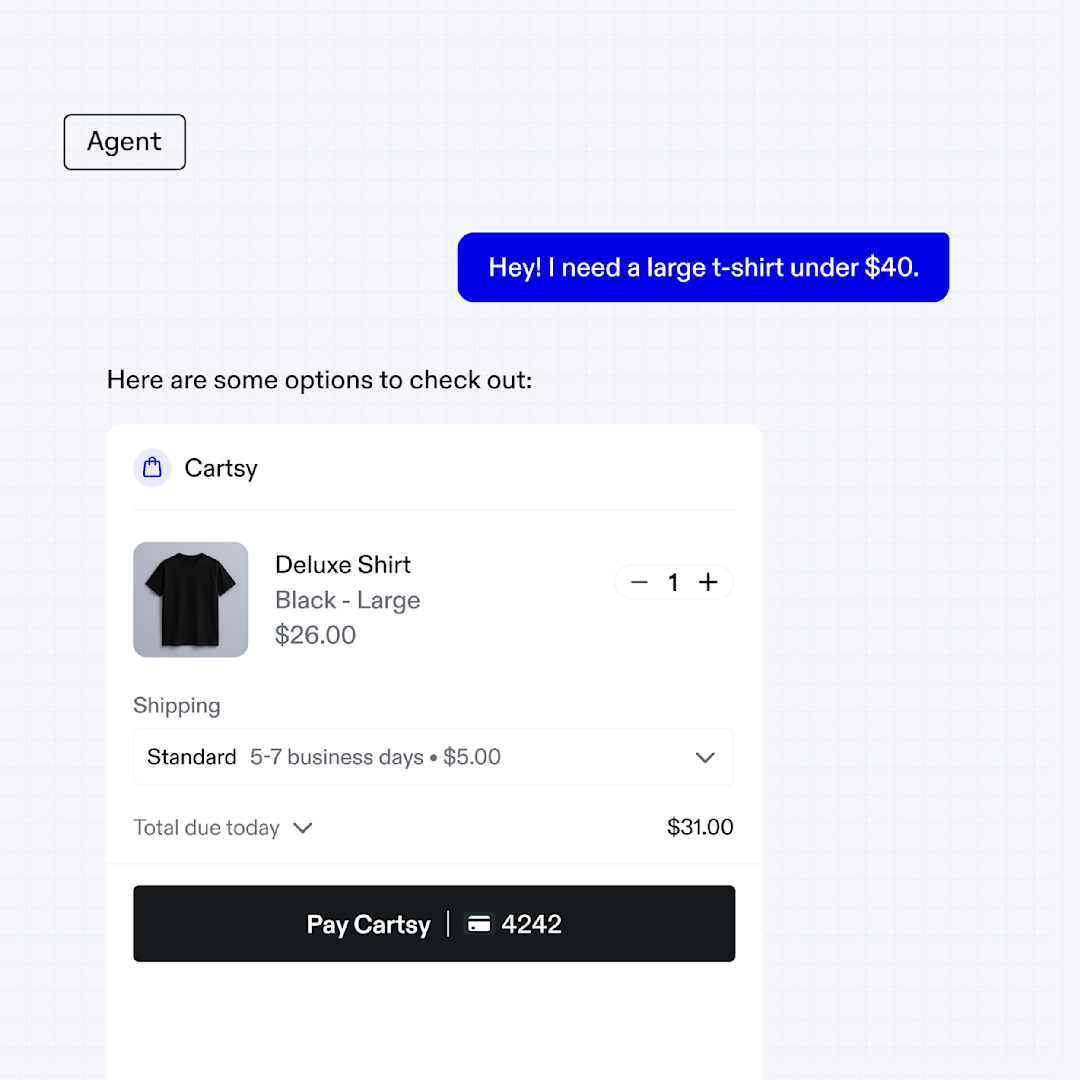

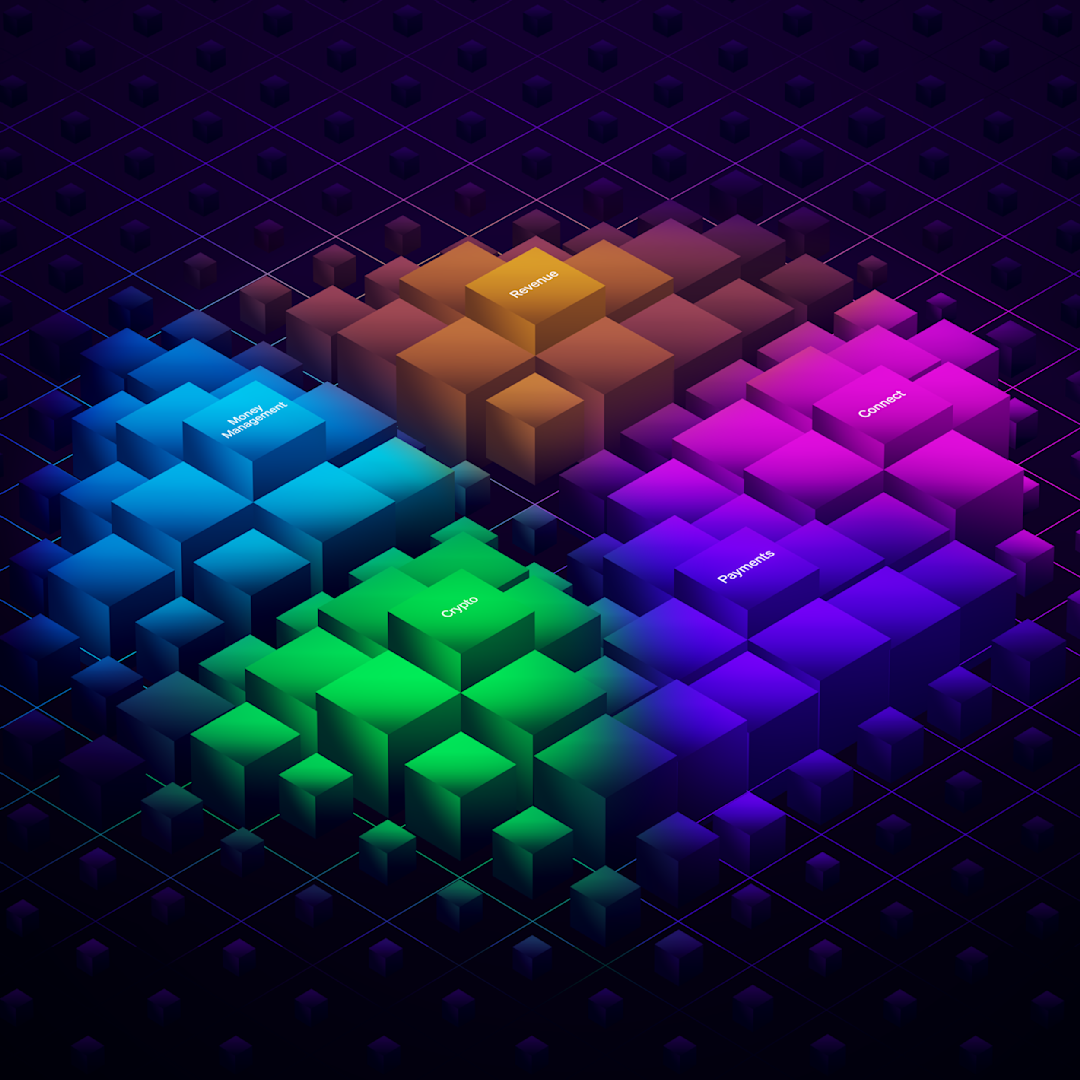

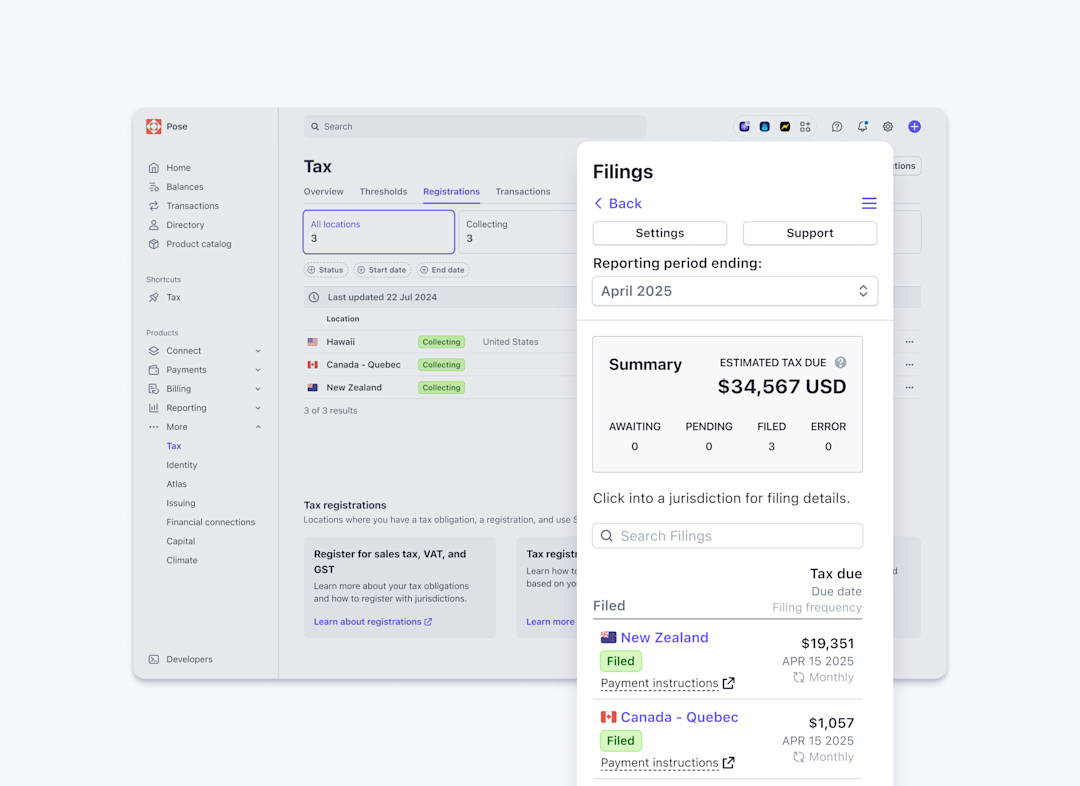

Leading SaaS platforms across industries—from Squarespace to Jobber—are using embedded components to ship payments and finance features with minimal code. We analyzed the data to see which types of platforms are getting the most value.